In early December 2023, Google DeepMind presented its long-awaited AI model, Gemini. The research team led by Demis Hassabis had kept the details of the project under wraps, which fueled great interest in the tech community. The presentation made it clear that DeepMind had created what it describes as the most capable neural network in its class, one that is poised to become a serious competitor to OpenAI's GPT-4. Given the relevance of this topic, we decided to cover it in our new article. You will learn what Google DeepMind is, how it came about and developed, and which technological innovations it has produced, from AlphaGo to PaLM 2. We pay special attention to its latest AI model, Gemini. We will also discuss the areas where the laboratory's projects are applied and their impact on the modern tech industry, and outline the prospects and directions for its future research.

Background of Google DeepMind

The British-American research laboratory Google DeepMind was founded in 2010 by three artificial intelligence specialists: Demis Hassabis, Shane Legg and Mustafa Suleyman. It is headquartered in London and also has centers in the USA, Canada, Germany and France. DeepMind's first projects were neural network models that learned to play video games. In January 2014, Google bought DeepMind for a reported $400 million and took control of the startup. That same year, DeepMind received the "Company of the Year" award from the Cambridge Computer Laboratory.

A year after joining Google, DeepMind's founders began negotiating with the corporation for more freedom in their activities. In 2017, they even tried to secede, but the attempt was unsuccessful. Alongside its main projects, the laboratory also took on an important area: the ethics of artificial intelligence. It established the AI Research Ethics Board and opened a new division called DeepMind Ethics and Society. One of its advisers was the well-known philosopher Nick Bostrom.

In December 2019, Mustafa Suleyman, one of the startup's founders, left DeepMind and moved to Google. In April 2023, the laboratory merged with Google Brain, Google's AI research division. The combined unit was named Google DeepMind. The merger allowed the corporation to accelerate the development of its own generative neural network, one that could become a worthy competitor to OpenAI's GPT-4 and ChatGPT. At the same time, it ended the London laboratory's period of relative autonomy, bringing it fully under Google's control.

Technological Innovations and Breakthroughs

Let's take a closer look at the most interesting part: how Google DeepMind works and what it has already achieved. Since 2014, the company has regularly released innovative products in the field of artificial intelligence. Its first major creation was AlphaGo, a program trained to play Go at a high level. In 2016, the laboratory began work on AlphaFold, a project aimed at solving problems in molecular biology, and introduced the WaveNet text-to-speech system.

In 2019, DeepMind developed AlphaStar, a neural network that plays the popular real-time strategy game StarCraft II. In 2022, it released AlphaCode, an AI model capable of writing computer programs. That same year, it created Gato, a multitask neural network in which a single model can perform hundreds of different tasks, from playing Atari games to captioning images and controlling a robot arm. Finally, in December 2023, the laboratory presented the acclaimed Gemini AI model, which is currently Google's largest and most capable neural network.

AlphaGo

The laboratory's first flagship development heralded a new era of AI systems. Work on AlphaGo began in 2014, and the program was trained to play Go at the highest level. In October 2015, it became the first computer program to defeat a professional human Go player on a full-size board, and over the following years it beat several world champions, including Lee Sedol in 2016. The program combines deep neural networks with advanced tree search algorithms. During training, it honed its skills over many games against different versions of itself, constantly learning from its mistakes.
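AlphaGo-style programs combine the two ingredients during search: a policy network proposes promising moves, and the tree search decides which move to explore next by balancing each move's average result so far against the network's prior, shrinking the prior's influence the more often a move has been tried. Below is a minimal sketch of that selection step, modeled on a simplified form of the PUCT rule described in the AlphaGo Zero paper. The `Edge` structure, the move names and the constant `c_puct = 1.5` are illustrative assumptions, not DeepMind's code.

```python
import math
from dataclasses import dataclass


@dataclass
class Edge:
    """Search statistics for one candidate move (hypothetical structure)."""
    prior: float            # P(s, a): policy network's probability for this move
    visits: int = 0         # N(s, a): simulations that have gone through this move
    value_sum: float = 0.0  # sum of values backed up through this move

    @property
    def q(self) -> float:
        # Q(s, a): average value so far; unvisited moves default to 0 here.
        return self.value_sum / self.visits if self.visits else 0.0


def puct_select(edges: dict[str, Edge], c_puct: float = 1.5) -> str:
    """Pick the move maximizing Q(s, a) + U(s, a)."""
    total_visits = sum(e.visits for e in edges.values())

    def score(edge: Edge) -> float:
        # Exploration bonus: large for moves the network likes but search has rarely tried.
        u = c_puct * edge.prior * math.sqrt(total_visits) / (1 + edge.visits)
        return edge.q + u

    return max(edges, key=lambda move: score(edges[move]))


edges = {
    "a": Edge(prior=0.6, visits=10, value_sum=4.0),
    "b": Edge(prior=0.3, visits=1, value_sum=0.5),
    "c": Edge(prior=0.1),
}
best_move = puct_select(edges)  # "b"
```

Move `b` is chosen over the network's favorite `a` because it has a higher average value and far fewer visits, so its exploration bonus is larger; as visit counts grow, the bonus shrinks and the average value dominates.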

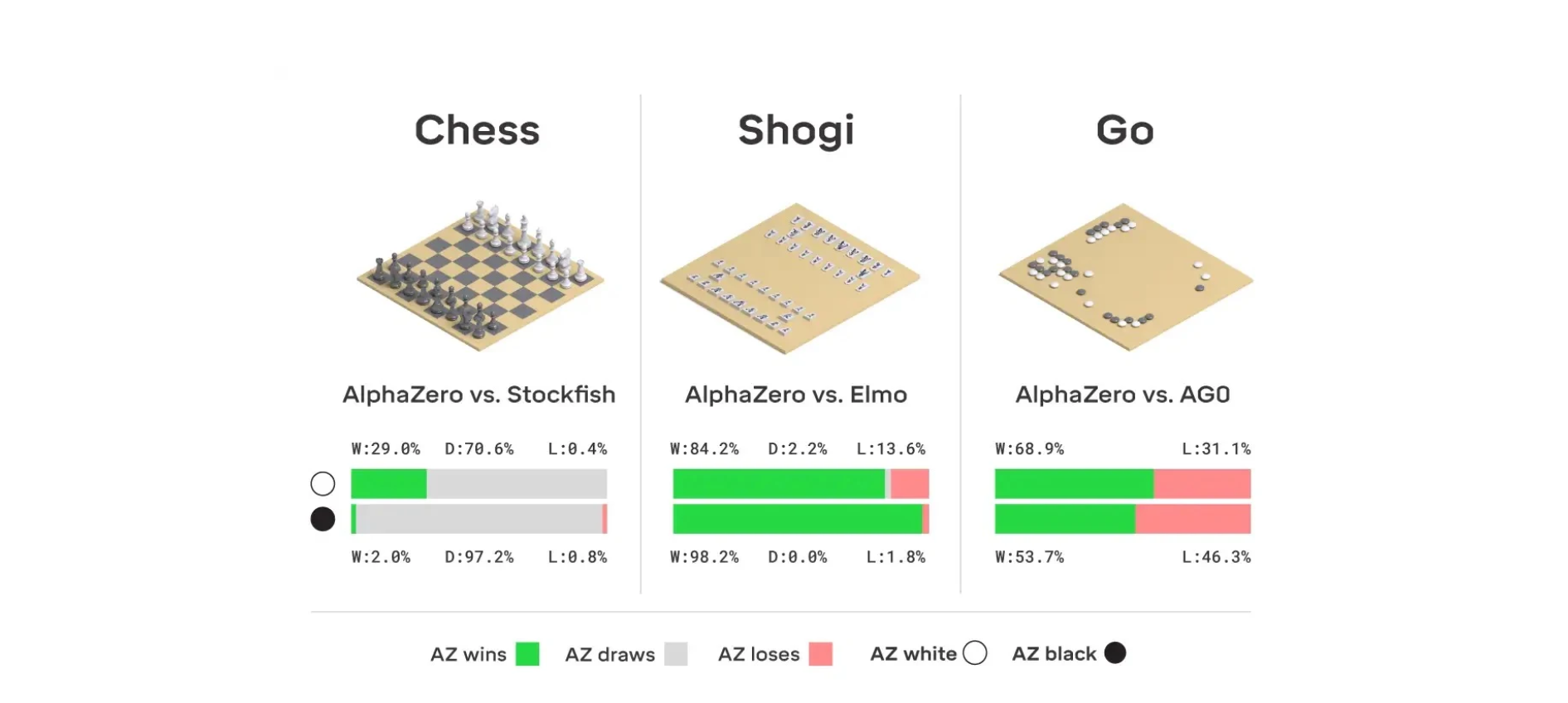

AlphaZero and MuZero

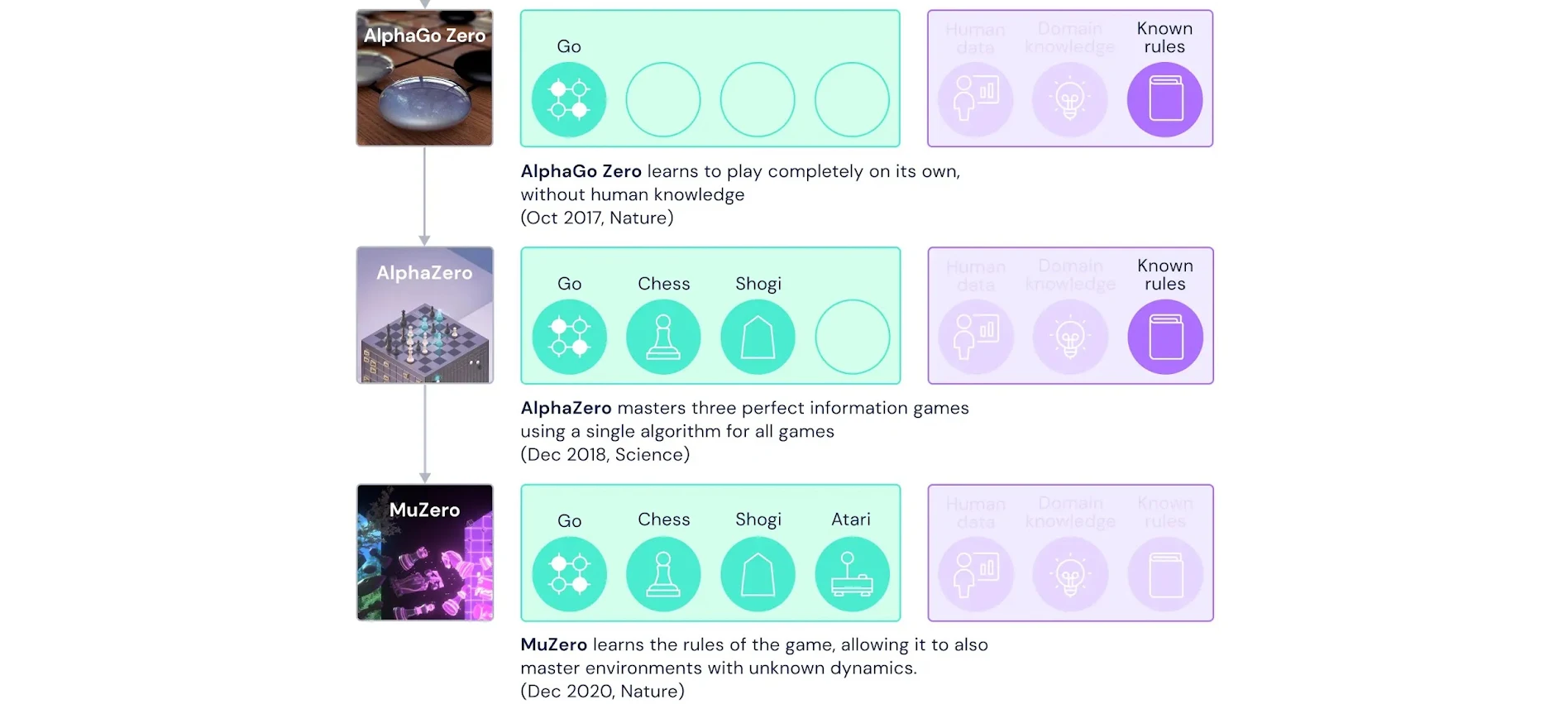

In 2017, researchers introduced AlphaZero, a more general successor to AlphaGo. It mastered several board games, including Go, chess and shogi, at a superhuman level. AlphaZero learns entirely on its own: the developers gave the program only the rules of each game, after which it played millions of games against itself and quickly reached mastery.
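AlphaZero's training data comes from self-play: every position in a finished game is labeled with the final outcome from the point of view of the player to move, and the value network learns to predict that label. The sketch below shows only this data-generation loop. It assumes a toy game chosen for brevity (take 1 to 3 stones; whoever takes the last stone wins), and a uniformly random placeholder policy stands in for the network-guided search AlphaZero actually uses. All function names are hypothetical.

```python
import random


def legal_moves(pile: int) -> list[int]:
    # Toy rules (assumption for illustration): remove 1, 2 or 3 stones;
    # whoever takes the last stone wins.
    return [k for k in (1, 2, 3) if k <= pile]


def self_play_game(start_pile: int, rng: random.Random) -> list[tuple[int, int]]:
    """Play one game of the program against itself.

    Returns (position, z) pairs, where z = +1 if the player to move in that
    position went on to win the game and -1 otherwise.
    """
    positions = []
    pile = start_pile
    while pile > 0:
        positions.append(pile)
        # Placeholder policy: AlphaZero would choose moves with network-guided
        # tree search here; this sketch picks uniformly at random.
        pile -= rng.choice(legal_moves(pile))
    # The player who made the final move won; players alternate, so the
    # outcome label flips sign at every earlier position.
    n = len(positions)
    return [(pos, 1 if (n - 1 - i) % 2 == 0 else -1) for i, pos in enumerate(positions)]


def generate_dataset(num_games: int, start_pile: int, seed: int) -> list[tuple[int, int]]:
    # Training examples for a value network would be collected like this.
    rng = random.Random(seed)
    return [ex for _ in range(num_games) for ex in self_play_game(start_pile, rng)]
```

In a real system, the network trained on these labels would then guide the next round of self-play, so the data and the player improve together.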

DeepMind then introduced MuZero, which can play Go, chess, shogi and Atari video games. It does not need to be told the rules of the games: the neural network learns a model of its environment (the game) on its own and uses it to plan the best sequence of actions.
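MuZero plans with three learned functions: a representation function that encodes the observation into a hidden state, a dynamics function that predicts the next hidden state and reward for an action, and a prediction function that estimates how good a hidden state is. The sketch below illustrates only the idea of planning inside a learned model by scoring every short action sequence on predicted rewards plus a discounted predicted value. MuZero itself uses Monte Carlo tree search rather than this exhaustive lookahead, and the toy integer "models" in the usage example are hypothetical stand-ins for neural networks.

```python
import itertools
from typing import Any, Callable, Sequence


def plan(observation: Any,
         actions: Sequence[int],
         horizon: int,
         represent: Callable[[Any], Any],
         dynamics: Callable[[Any, int], tuple[Any, float]],
         predict_value: Callable[[Any], float],
         discount: float = 0.99) -> tuple[int, ...]:
    """Return the action sequence with the highest predicted return.

    Everything after `represent` happens inside the learned model: the real
    environment and its rules are never consulted.
    """
    root = represent(observation)  # encode the observation into a hidden state
    best_seq: tuple[int, ...] = ()
    best_return = float("-inf")
    for seq in itertools.product(actions, repeat=horizon):
        state, total, weight = root, 0.0, 1.0
        for action in seq:
            state, reward = dynamics(state, action)  # predicted next state and reward
            total += weight * reward
            weight *= discount
        total += weight * predict_value(state)  # bootstrap with the predicted value
        if total > best_return:
            best_seq, best_return = seq, total
    return best_seq


# Toy stand-ins for the learned networks (hypothetical): the hidden state is an
# integer, actions add -1 or +1 to it, and reaching 3 yields a reward of 1.
best = plan(observation=1, actions=[-1, 1], horizon=2,
            represent=lambda obs: obs,
            dynamics=lambda s, a: (s + a, 1.0 if s + a == 3 else 0.0),
            predict_value=lambda s: 0.0,
            discount=0.9)
# best == (1, 1)
```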

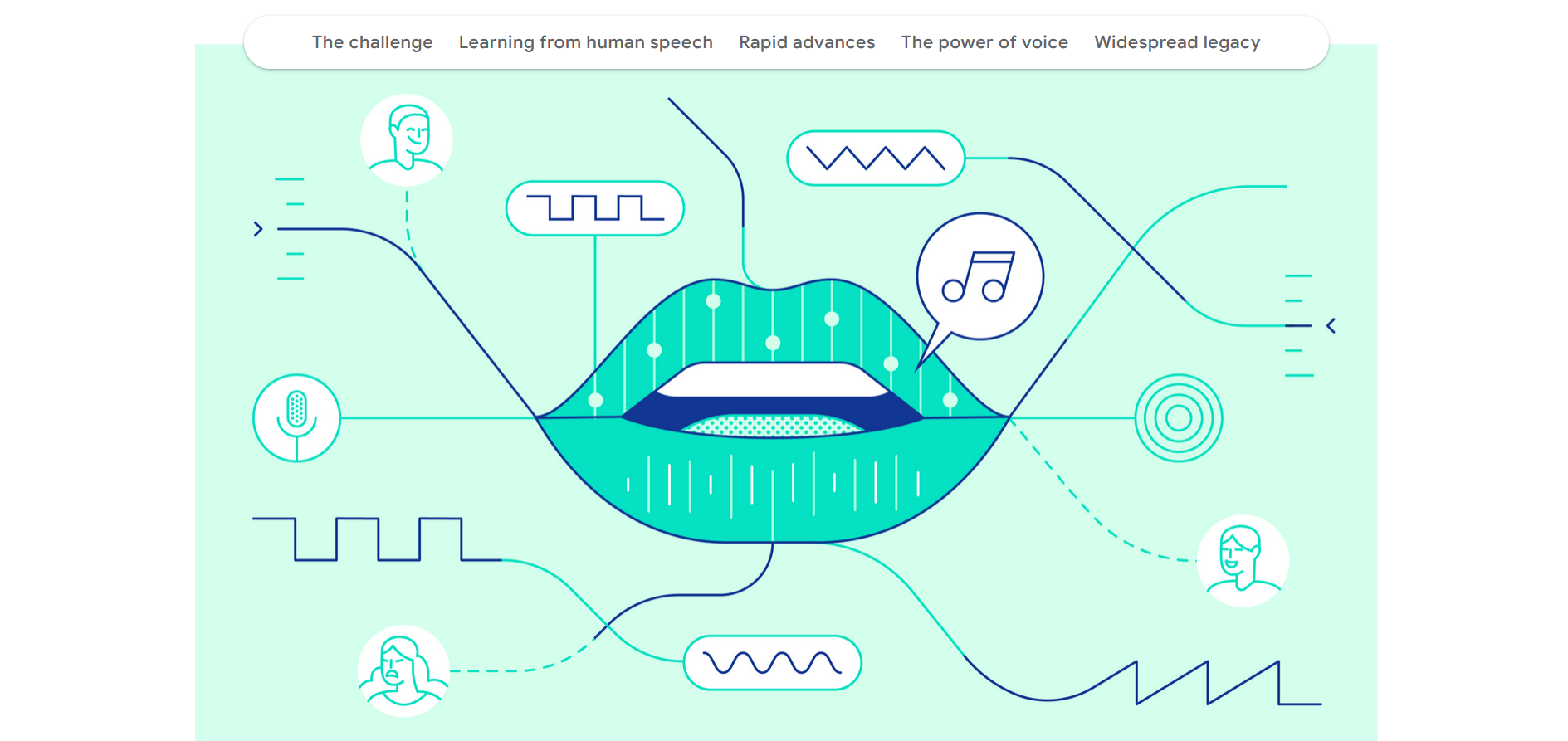

WaveNet

In 2016, Google DeepMind introduced WaveNet, one of the first AI models capable of generating natural-sounding speech. A year later, it was deployed in a number of publicly available products, including Google Assistant. In 2018, Google launched Cloud Text-to-Speech, a speech synthesis service based on WaveNet. DeepMind and Google AI subsequently developed WaveRNN, a more efficient neural network for speech generation.

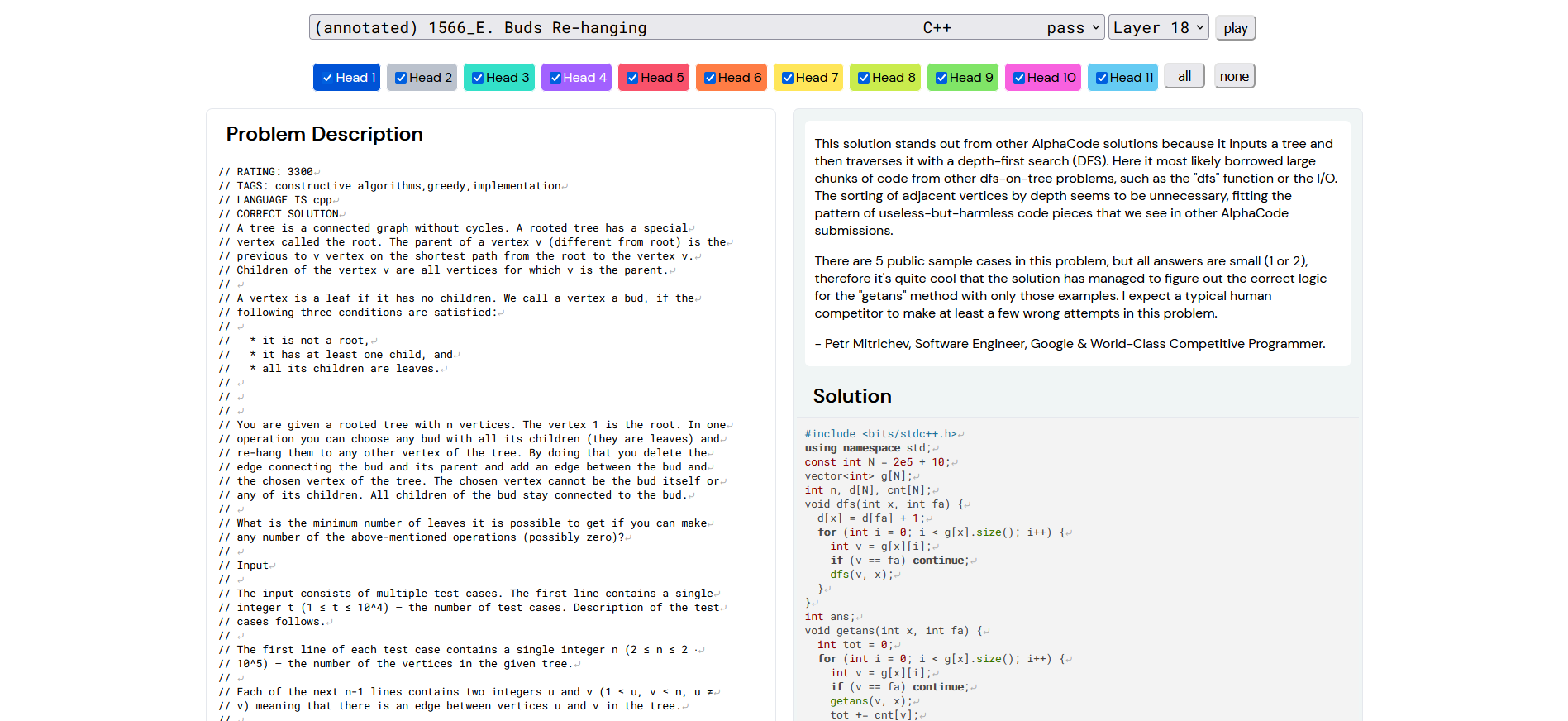

AlphaCode

In February 2022, the laboratory released another AI system, AlphaCode, capable of automatically generating program code at roughly the level of an average participant in programming competitions. It was trained on code from GitHub and on problems from Codeforces. The system aims to produce original solutions rather than reproduce previously published ones. In December 2023, DeepMind released AlphaCode 2, based on the Gemini neural network. The updated system handles dynamic programming problems that its predecessor could not. Based on test results from coding competitions on the Codeforces platform, AlphaCode 2 ranked among the top 15% of participating programmers. It has shown solid results in Python, Java, C++ and Go, and has also successfully solved mathematics and computer science problems.
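According to the AlphaCode paper, the system samples a very large number of candidate programs, discards those that fail the example tests in the problem statement, and clusters the survivors by how they behave on extra test inputs, so that the few solutions it submits are not duplicates of one another. Below is a minimal sketch of that filter-and-cluster step. It assumes, as a hypothetical simplification, that candidates are plain Python functions from int to int rather than full programs reading standard input; the function and parameter names are illustrative.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical simplification: each candidate "program" is a Python function int -> int.
Program = Callable[[int], int]


def filter_and_cluster(candidates: list[Program],
                       examples: list[tuple[int, int]],
                       probe_inputs: list[int],
                       max_submissions: int) -> list[Program]:
    # 1. Filtering: keep only candidates that reproduce every example output.
    passing = [p for p in candidates if all(p(x) == y for x, y in examples)]

    # 2. Clustering: candidates that behave identically on the probe inputs
    #    are treated as duplicates of one another.
    clusters: dict[tuple[int, ...], list[Program]] = defaultdict(list)
    for p in passing:
        behaviour = tuple(p(x) for x in probe_inputs)
        clusters[behaviour].append(p)

    # 3. Submit one representative from each of the largest clusters.
    ranked = sorted(clusters.values(), key=len, reverse=True)
    return [cluster[0] for cluster in ranked[:max_submissions]]


candidates = [
    lambda x: x * 2,
    lambda x: x + x,                    # same behaviour as the first candidate
    lambda x: x * 2 if x < 100 else 0,  # passes the examples, differs on large inputs
    lambda x: x + 1,                    # fails the second example
]
picked = filter_and_cluster(candidates, examples=[(1, 2), (3, 6)],
                            probe_inputs=[0, 5, 200], max_submissions=10)
```

Here `picked` holds two programs: one representative of the two equivalent doubling functions, and the variant that only differs on large inputs.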

Imagen

In May 2022, Google introduced Imagen, a text-to-image AI system that generates photorealistic images from text prompts. It leverages large transformer language models to understand the text and produces high-quality images using diffusion models. Imagen was evaluated on DrawBench, a comprehensive benchmark for text-to-image models introduced alongside it. In side-by-side comparisons, human raters preferred Imagen's images over those of DALL-E 2 and other similar neural networks.
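Diffusion models are trained to reverse a fixed noising process. In the standard DDPM formulation, a clean image x_0 is turned into a noisy version x_t = sqrt(ᾱ_t) · x_0 + sqrt(1 − ᾱ_t) · ε, where ε is Gaussian noise and ᾱ_t shrinks toward zero as t grows; the network then learns to predict ε from x_t. The sketch below shows only this forward process. It assumes a linear noise schedule with 1000 steps (common DDPM defaults, not Imagen's actual configuration) and represents an "image" as a flat list of floats; the function names are hypothetical.

```python
import math
import random


def linear_alpha_bars(num_steps: int, beta_start: float = 1e-4,
                      beta_end: float = 0.02) -> list[float]:
    """Cumulative products alpha_bar_t = prod_{s<=t} (1 - beta_s) for a linear beta schedule."""
    if num_steps < 2:
        raise ValueError("need at least two diffusion steps")
    alpha_bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noisy_sample(x0: list[float], alpha_bar: float, rng: random.Random) -> list[float]:
    """Forward process: x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps, eps ~ N(0, I)."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * x + b * rng.gauss(0.0, 1.0) for x in x0]


schedule = linear_alpha_bars(1000)
x_half_way = noisy_sample([0.5, -1.0, 2.0], schedule[499], random.Random(0))
```

Early in the schedule the sample is almost the original image; near the end it is almost pure noise, which is where generation starts.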

Phenaki

Phenaki, a text-to-video model developed by Google researchers, was introduced in October 2022. It can synthesize realistic videos from text prompts. One of its key components is an encoder-decoder model that compresses video into discrete embeddings, or tokens, using a tokenizer. This allows the neural network to create videos of a user-specified length. A transformer model then converts text into video tokens, which are subsequently detokenized back into video.
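Turning continuous video features into discrete tokens is typically done by vector quantization: each patch embedding produced by the encoder is replaced by the index of its nearest entry in a learned codebook, and decoding looks those entries back up. Below is a minimal sketch of that quantization step in pure Python. The hand-written 2-D codebook and embeddings are hypothetical stand-ins for Phenaki's learned ones, and the encoder and decoder networks themselves are not shown.

```python
Vector = tuple[float, ...]


def nearest_code(vec: Vector, codebook: list[Vector]) -> int:
    """Index of the codebook entry closest to vec (squared Euclidean distance)."""
    return min(range(len(codebook)),
               key=lambda i: sum((v - c) ** 2 for v, c in zip(vec, codebook[i])))


def tokenize(embeddings: list[Vector], codebook: list[Vector]) -> list[int]:
    # Continuous patch embeddings -> discrete token ids.
    return [nearest_code(e, codebook) for e in embeddings]


def detokenize(tokens: list[int], codebook: list[Vector]) -> list[Vector]:
    # Token ids -> codebook vectors, which a decoder would turn back into pixels.
    return [codebook[t] for t in tokens]


codebook = [(0.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
embeddings = [(0.1, -0.2), (0.9, 1.2), (0.2, 0.8)]
tokens = tokenize(embeddings, codebook)  # [0, 1, 2]
```

Once video is a sequence of integers like `tokens`, a transformer can generate it the same way a language model generates words.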

PaLM 2

The PaLM 2 language model (Gemini's predecessor) was released in May 2023. It is reported to contain 340 billion parameters and to have been trained on 3.6 trillion tokens. The neural network solves mathematics and coding problems, classifies text and answers questions. It also translates and generates text in many languages better than Google's previous AI models, including the original PaLM. Before Gemini's release, this model powered the Bard chatbot. It is also available for integration with third-party software via the PaLM API.

Gemini

Although Google DeepMind has been actively working in the field of generative AI, it has lagged behind the industry leader, OpenAI. In December 2021, DeepMind presented Gopher, a large language model, but decided not to release it to the public. Like many neural networks, it often gave inaccurate answers (so-called "hallucinations"). Google announced its own AI chatbot, Bard, in February 2023, but it was clearly inferior to its competitors and attracted little interest from the community. In May, Google upgraded Bard to the new PaLM 2 model, but it still failed to match GPT-4.

A real breakthrough compared to previous projects was Gemini, a multimodal AI model built from the ground up. It can process, analyze, summarize and combine text, code, images, audio and video. The model is designed to run on a wide range of hardware, from data centers to mobile devices. According to the developers, Gemini outperformed GPT-4 on 30 of 32 widely used benchmarks. These included tests covering 57 subjects (mathematics, physics, medicine, law, history, ethics, etc.) that assess both theoretical knowledge and problem-solving skills, as well as Python programming tasks.

Gemini 1.0 will be available in three variants:

- Gemini Ultra. The full-featured and most powerful version for solving the most complex problems.

- Gemini Pro. A balanced version for a wide range of tasks.

- Gemini Nano. A less resource-intensive on-device version, suitable for mobile devices (including the latest Google Pixel).

The new AI model has already been built into the Google Bard chatbot, where it replaced PaLM 2. The capabilities of the Gemini-powered bot were demonstrated at the product presentation; according to the DeepMind team, it has gained more advanced understanding, planning and reasoning skills. The updated Bard is available in English in more than 170 countries and territories, but not in the UK or EU countries, due to restrictions from local regulators. Individual developers and companies can access Gemini Pro starting December 13. The flagship Gemini Ultra will become publicly available in early 2024, after safety checks.

Applications and Real-World Impact

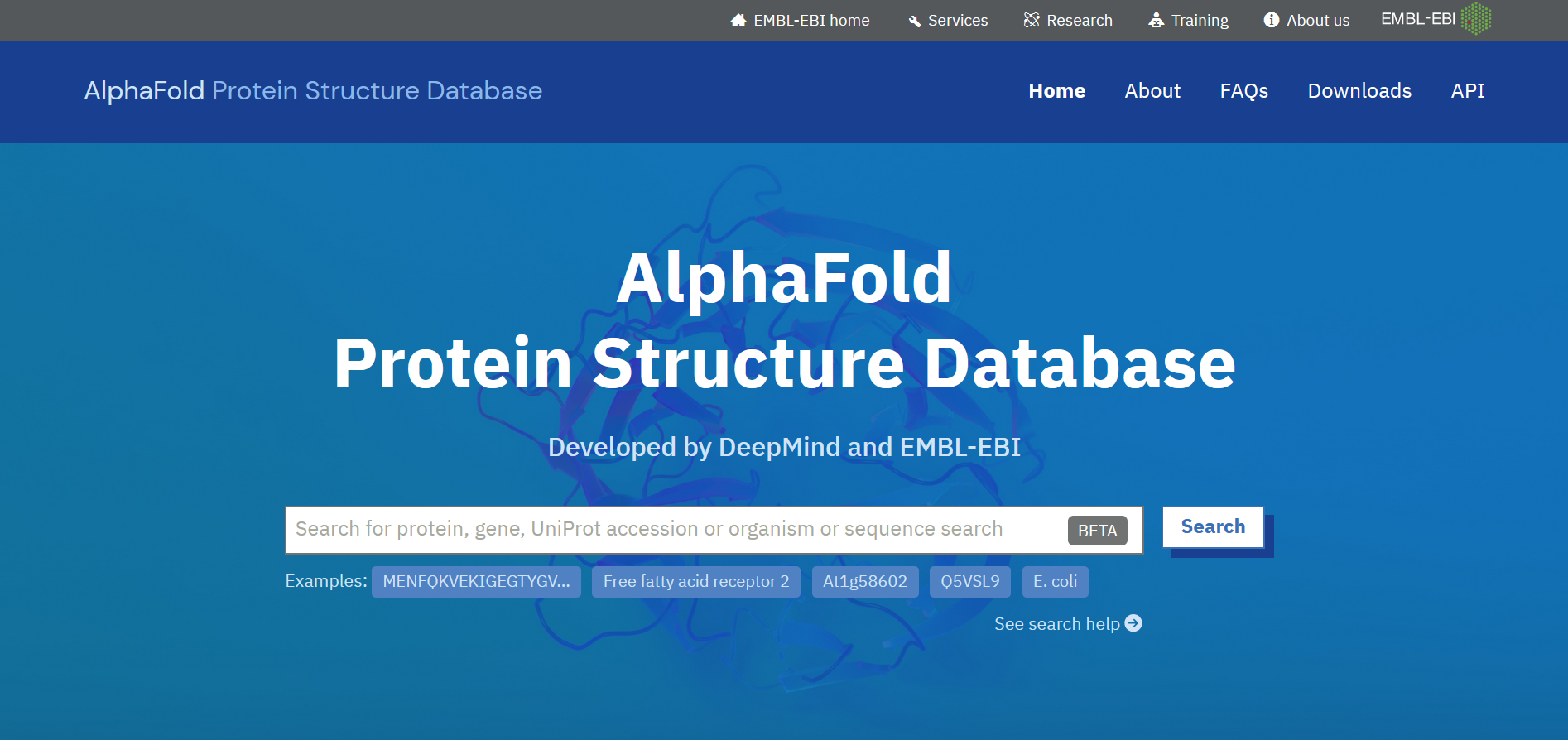

Google DeepMind's AI technologies are used in biology, energy, medicine, sports, archeology and other fields. One of the most striking examples is the AlphaFold AI model, which has helped accelerate research in almost every area of biology.

Work on AlphaFold began in 2016, and in 2020 its second version effectively solved the long-standing problem of predicting a protein's 3D structure from its amino acid sequence. Today, the AlphaFold database contains predicted structures for more than 200 million proteins. Artificial intelligence has thus answered an important scientific question that occupied many scientists for decades. In the future, this may be key to developing medicines for currently incurable diseases or to tackling plastic pollution on the planet.

Another example of DeepMind's work in science is the Graph Networks for Materials Exploration (GNoME) deep learning model. It helped scientists discover 2.2 million new crystals, including 380,000 stable materials that are the most promising candidates for experimental synthesis. Materials discovered with GNoME could enable a number of promising technologies, including superconductors, components for supercomputers and more energy-efficient next-generation batteries.

The AI models developed by DeepMind laboratory have found application not only in science, but also in other areas:

- Increasing the efficiency of cooling systems in Google data centres.

- Helping personalize Google Play app recommendations.

- Modelling the actions of football players using heat maps and cluster analysis.

- And much, much more.

Future Prospects and Directions

Despite outstanding achievements in many areas, the Google DeepMind team does not rest on its laurels. It continues to open new prospects for human progress with the help of AI. One current area of research at the laboratory is designing enzymes that break down plastic, which could make it 100% recyclable. The researchers are also using AlphaFold to study the early signs of Parkinson's disease. Its predictions could help find new treatments for the disease, which affects more than 10 million people worldwide.

AlphaFold is also being used to develop a more effective malaria vaccine. According to scientists working on this project, DeepMind's neural network has taken the work to a new level: it has moved from fundamental science to preclinical and clinical development. Also worth noting is the use of the laboratory's AI technologies in Project Euphonia, which aims to help computers better understand people with speech impairments. The project trains speech recognition models on recordings of their voices.

Conclusion

Google DeepMind is not just another startup building a smart chatbot. The company's achievements and prospects go far beyond the tech industry. Over its 13 years, it has introduced many AI-based innovations for a variety of fields, from molecular biology to video games. DeepMind not only opens up truly exciting prospects for the application of AI, but also lays the groundwork for its future integration into the lives of people everywhere. The newly released Gemini, a highly capable neural network aptly dubbed "the machine for everything", may well play a key role in this.
